Hasky Annotation Tool

Hasky uses self-supervised training techniques to significantly reduce the need for text annotation.

Split major workflows up

- Uploading a dataset

- Reviewing a dataset

- Editing a dataset

- Labelling data

- Adding/deleting data

- Uploading the dataset

- Selecting a model

- Running the model

- Labelling the data

Improve readability of tables

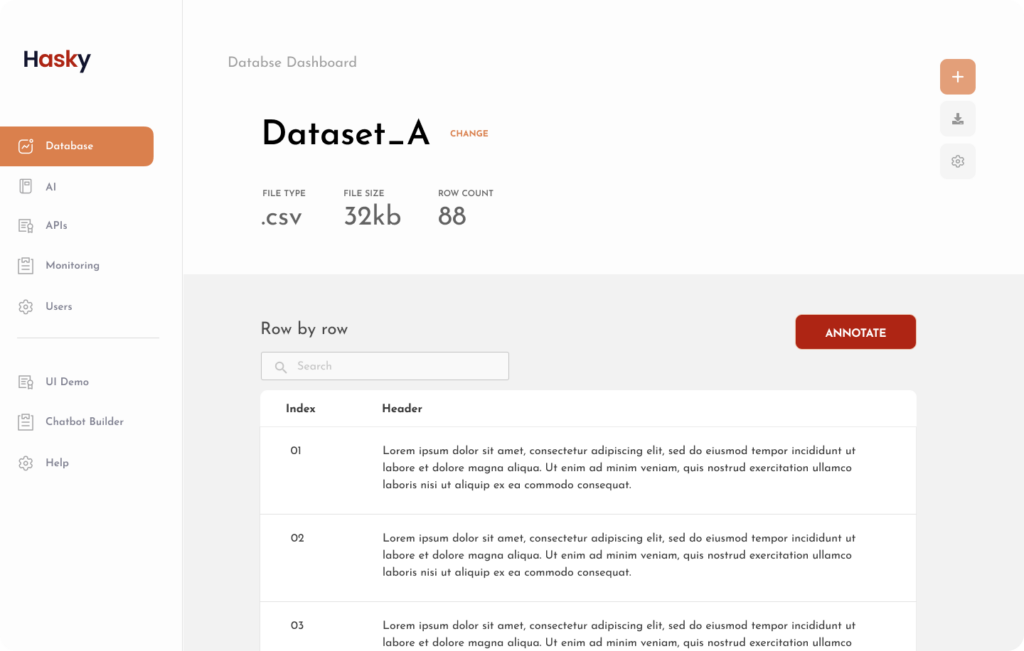

Tables that contain different types of data require different design structures and layouts. For Natural Language Processing tools, datasets are often just spreadsheets of text-heavy columns and rows.

It is therefore important that text-heavy tables are well designed, so users can scan, read, and parse the data easily.

The redesigned dataset workflow features a spreadsheet with a header section that gives users important context: the file name, file type, file size, and number of rows in the dataset.
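As a rough illustration, the header fields described above could be derived from an uploaded file along these lines. This is a minimal sketch, not Hasky's actual code: `DatasetHeader` and `summarise_dataset` are hypothetical names, and it assumes a UTF-8 CSV upload whose first row holds the column names.

```python
import csv
import io
from dataclasses import dataclass
from pathlib import PurePath


@dataclass
class DatasetHeader:
    """Context shown above the table (hypothetical structure)."""
    name: str
    file_type: str
    size_bytes: int
    row_count: int


def summarise_dataset(filename: str, raw: bytes) -> DatasetHeader:
    """Build the header context for an uploaded CSV file."""
    rows = list(csv.reader(io.StringIO(raw.decode("utf-8"))))
    # Assumption: the first row is a column-name row and is not counted as data.
    row_count = max(len(rows) - 1, 0)
    path = PurePath(filename)
    return DatasetHeader(
        name=path.stem,
        file_type=path.suffix.lstrip(".").upper() or "UNKNOWN",
        size_bytes=len(raw),
        row_count=row_count,
    )
```

Computing these values once at upload time means the header can be rendered without re-reading the file each time the table is shown.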

The redesigned table also presents the dataset in styled rows with increased line height and more padding around each cell. This improves the readability of text-heavy rows.

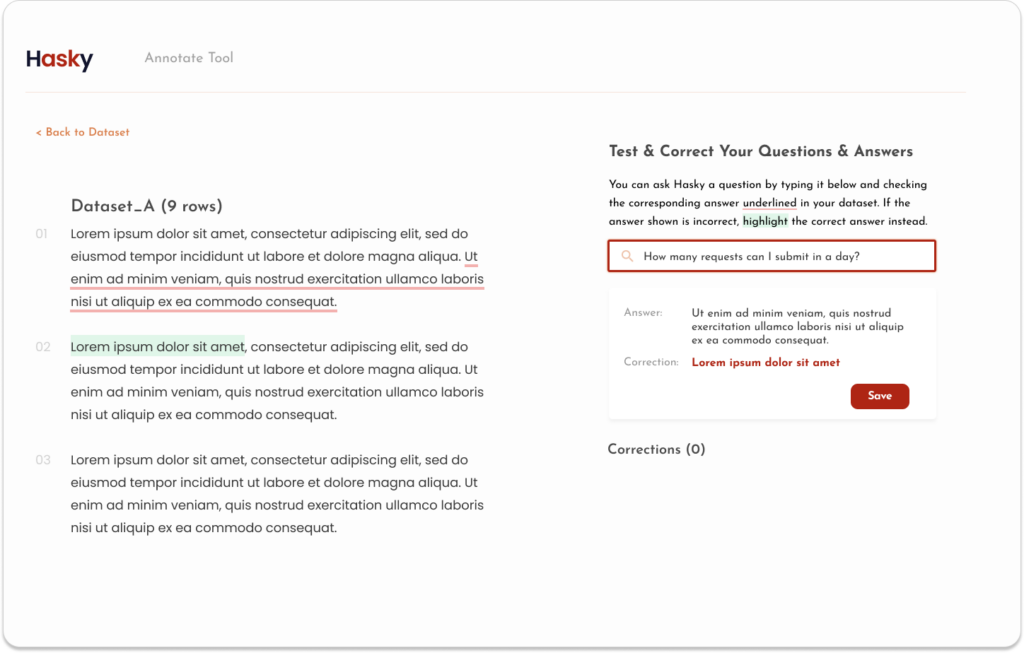

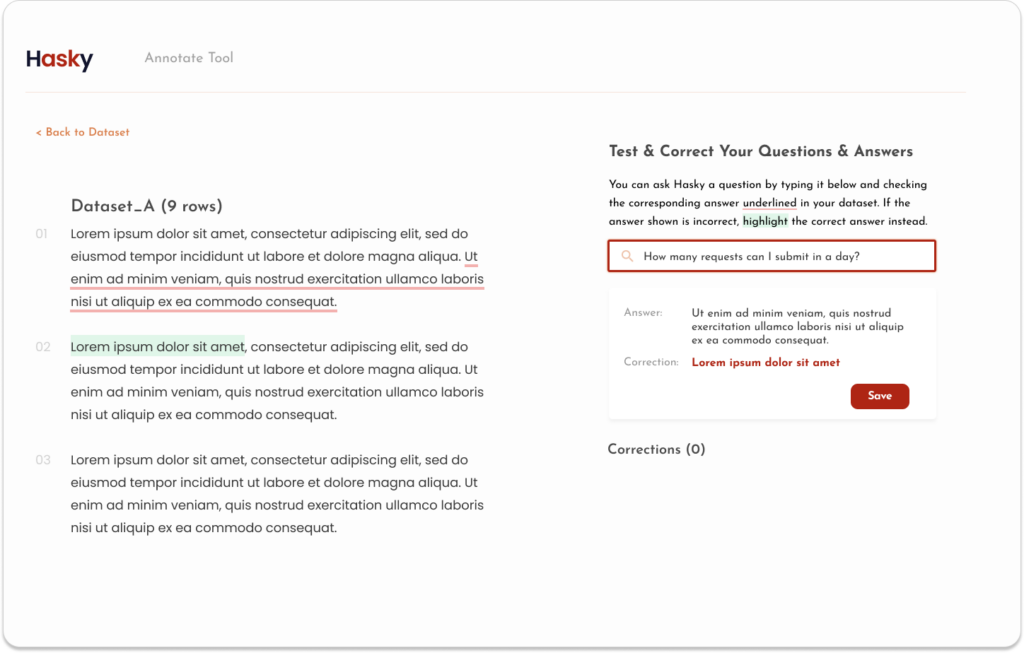

Make it easy to teach the model

The Hasky Annotation tool was built to make labelling data faster and smoother. Instead of the traditional method of correcting specific chunks of text in a spreadsheet, you can highlight the word, sentence, or paragraph that you would like to correct.

With one click and drag, this highlighting process makes it faster for labellers to locate and specify which part of the data they want corrected.
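One way to think about a click-and-drag highlight is as a pair of character offsets into the text of a row. The sketch below is illustrative only: the `Highlight` record and `make_highlight` helper are hypothetical, and it assumes the interface reports where the drag started (`anchor`) and where it ended (`focus`).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Highlight:
    """A highlighted span within one row of the dataset (hypothetical)."""
    row_id: int
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    text: str


def make_highlight(row_id: int, row_text: str, anchor: int, focus: int) -> Highlight:
    """Turn a drag from `anchor` to `focus` into a normalised span."""
    # A drag can run right-to-left, so order the two offsets first.
    start, end = sorted((anchor, focus))
    # Clamp to the bounds of the row text.
    start = max(start, 0)
    end = min(end, len(row_text))
    if start >= end:
        raise ValueError("selection is empty")
    return Highlight(row_id, start, end, row_text[start:end])
```

Storing offsets alongside the selected text keeps the highlight unambiguous even when the same word appears more than once in a row.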

In the original design, shown below, the workflow for correcting a specific label was:

- Ask a question

- Review top answers from the dataset provided by the model

- Locate the correct answer and highlight it

However, this task was not clearly communicated to the user. Explanations were lacking, and nothing indicated what the first step should be. Moreover, once a question was asked, “Select” buttons would appear at the side of each cell, which baffled users.

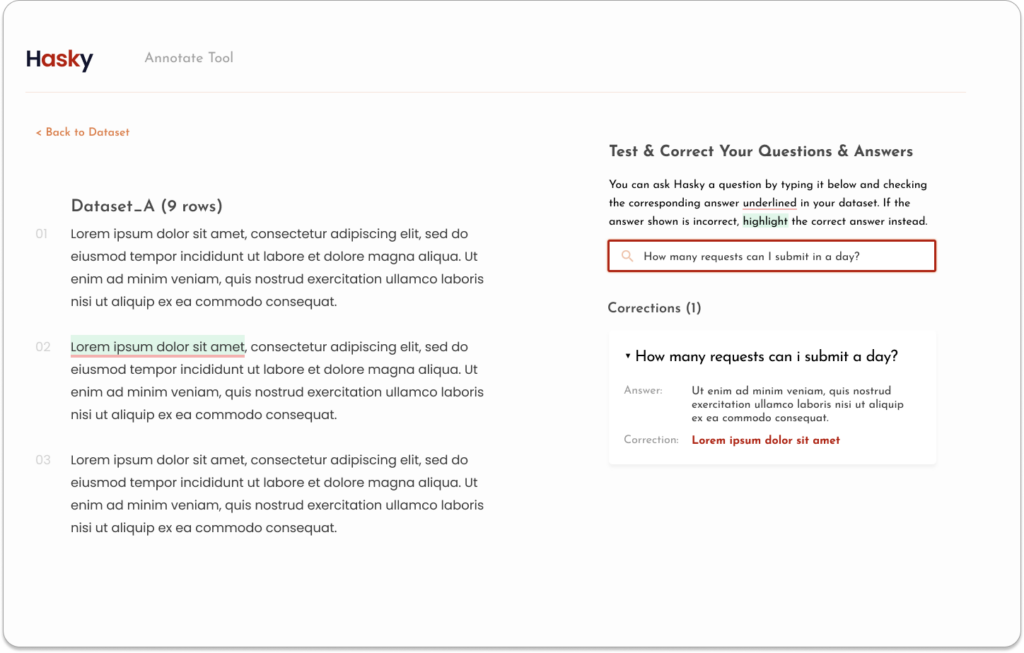

In the new design, the entire workflow of teaching the model was moved into its own section.

Explanations are clearly written, and the text box for the input of questions is highlighted and in focus.

Use colours to signify correct/incorrect states

Provide a history of corrections

In the original Hasky Annotation tool, there was a lack of feedback when an answer was corrected and sent back to the model. There was also no way to see a history of corrections.

The new design addresses this by saving each correction in an expandable section.
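A history like this can be modelled as a simple append-only log. The following is a minimal sketch under stated assumptions: the `Correction` and `CorrectionHistory` names and their fields are hypothetical and are not taken from the Hasky codebase.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class Correction:
    """One correction sent back to the model (hypothetical fields)."""
    question: str
    corrected_answer: str
    created_at: datetime


@dataclass
class CorrectionHistory:
    """Append-only log backing the expandable history section."""
    entries: List[Correction] = field(default_factory=list)

    def record(self, question: str, corrected_answer: str) -> Correction:
        """Save a correction and return it so the UI can confirm it."""
        correction = Correction(question, corrected_answer, datetime.now(timezone.utc))
        self.entries.append(correction)
        return correction

    def latest_first(self) -> List[Correction]:
        """Return corrections with the most recent one at the top."""
        return list(reversed(self.entries))
```

Returning the saved record from `record` gives the interface something concrete to show as feedback, which covers the missing confirmation described above.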